Data-Driven Security Research

Understanding how security can be improved fundamentally requires the ability to measure the effectiveness of existing approaches. However, practical experience suggests that security metrics and models correlate poorly with vulnerabilities and attacks, and that they do not adequately capture the capabilities of adversaries. This is particularly true when investigating phenomena in large-scale deployments of systems in active use, which do not conform to development-time abstractions. In such systems, security is a moving target as attackers exploit new vulnerabilities (to subvert the system’s functionality), vendors distribute software updates (to patch vulnerabilities and to improve security) and users reconfigure the system (to add functionality).

In my research group, we build systems that provide practical and measurable security guarantees and that are grounded in a rigorous understanding of the motivations, capabilities and limitations of real-world adversaries. Many of our research projects use the WINE platform, which I built during my time at Symantec Research Labs [BADGERS 2011][CSET 2011][EDCC 2012]. WINE loads, samples and aggregates multiple data feeds, originating from millions of hosts around the world, and keeps them up-to-date. This platform provides the research community with unique opportunities for conducting empirical research in security.

Some of the research results from this project are described below.

Detecting malware with downloader graph analytics

We characterized the client-side infrastructure of malware delivery networks, by reconstructing the downloader-payload relationship among the executable files found on a host and by analyzing the downloader graphs generated by this relationship [CCS 2015]. Using graph mining techniques, we showed that we can distinguish between malicious and benign download activity and that we can detect malware an average of 9 days ahead of current anti-virus products.

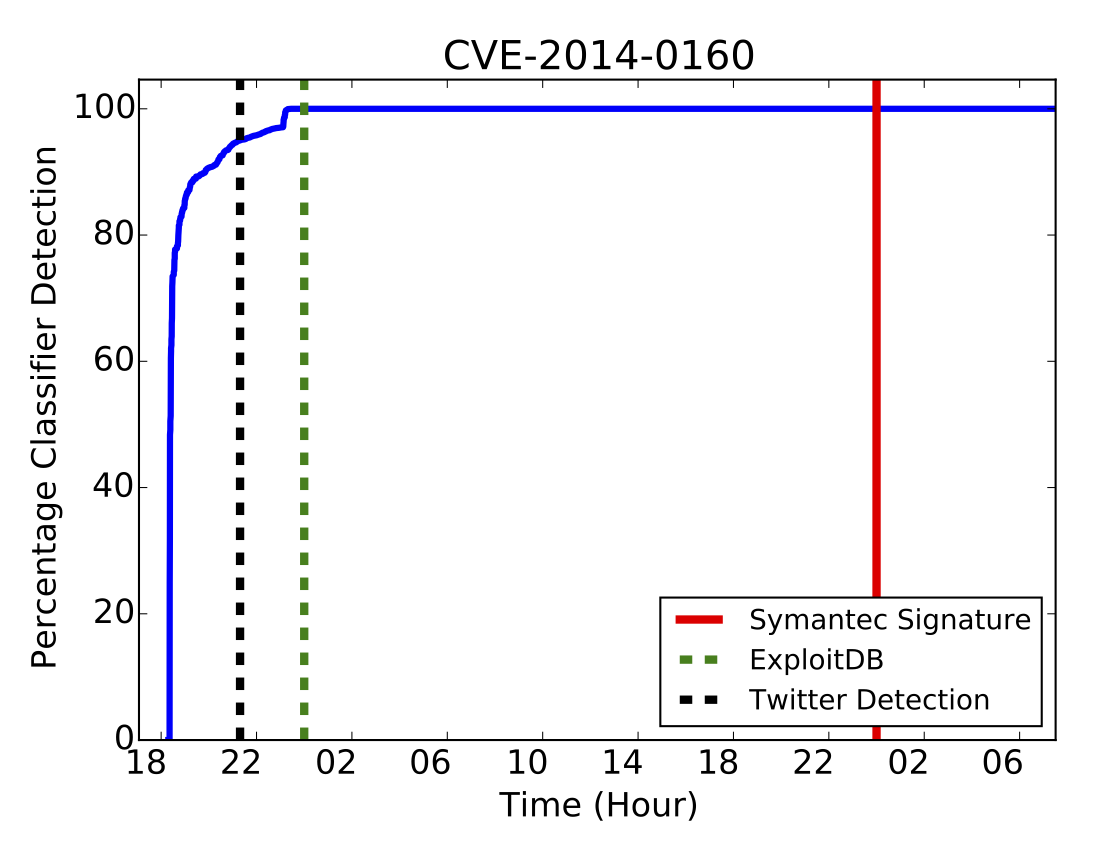
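To make the notion of a downloader graph concrete, the sketch below groups download events into one directed graph per host and computes a few structural properties. It is only an illustration under stated assumptions: the event format `(host, downloader, payload)`, the file names, and the three features are hypothetical, and the features and classifier actually used in [CCS 2015] are not reproduced here.

```python
from collections import defaultdict


def build_downloader_graphs(events):
    """Group (host, downloader, payload) events into per-host directed graphs.

    Each graph maps a downloader file to the set of payload files it fetched.
    The event format is an assumption made for this sketch.
    """
    graphs = defaultdict(lambda: defaultdict(set))
    for host, downloader, payload in events:
        graphs[host][downloader].add(payload)
    return graphs


def graph_features(edges):
    """Return simple structural features of one downloader graph."""
    nodes = set(edges)
    for payloads in edges.values():
        nodes |= payloads

    def depth(node, seen):
        # Longest download chain starting at `node`, counted in files.
        # `seen` guards against cycles in the download relationship.
        if node in seen:
            return 0
        seen = seen | {node}
        return 1 + max((depth(c, seen) for c in edges.get(node, ())), default=0)

    return {
        "nodes": len(nodes),
        "edges": sum(len(p) for p in edges.values()),
        "max_depth": max((depth(n, frozenset()) for n in nodes), default=0),
    }


# Hypothetical events, invented for illustration only.
events = [
    ("host-a", "dropper.exe", "stage2.exe"),
    ("host-a", "stage2.exe", "payload.exe"),
    ("host-a", "dropper.exe", "adware.exe"),
    ("host-b", "browser.exe", "installer.exe"),
]
graphs = build_downloader_graphs(events)
features_a = graph_features(graphs["host-a"])
features_b = graph_features(graphs["host-b"])
```

Features of this kind could serve as inputs to a classifier that separates malicious from benign download activity; which properties are actually discriminative is an empirical question that this sketch does not answer.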

Predicting vulnerability exploits with Twitter analytics

We built a machine learning system for predicting which vulnerabilities are going to be exploited in the wild [USENIX Security 2015]. This system uses information extracted from Twitter, where hackers and security researchers post technical details about exploits and the victims of attacks share their experiences.

The decay rate of vulnerable host populations

We measured the rate of patching for 1,593 vulnerabilities. These are vulnerabilities in client-side applications (e.g. browsers, document readers and editors, media players), and their decay is difficult to measure with the methods used in prior work (e.g. network scanning). We observed significant differences among hosts and vulnerable applications, and we identified several patterns of patching. We also found that a host may be affected by multiple instances of the same vulnerability (e.g. because the vulnerable program is installed in several directories or because the vulnerability is in a shared library distributed with several applications), and often some instances are patched while others are left vulnerable.
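One simple way to express the decay of a vulnerable host population is as the fraction of hosts that remain unpatched on each day after disclosure. The sketch below computes such a curve; the input format (one patch delay in days per host, with `None` for hosts never observed to patch) and the sample values are assumptions made for illustration, not data or methodology from the study.

```python
def vulnerable_fraction(patch_delays, horizon_days):
    """Fraction of hosts still vulnerable on each day 0..horizon_days.

    patch_delays holds, per host, the number of days between disclosure and
    patching, or None if the host was never observed to patch.
    """
    total = len(patch_delays)
    if total == 0:
        raise ValueError("need at least one host")
    curve = []
    for day in range(horizon_days + 1):
        still_vulnerable = sum(1 for d in patch_delays if d is None or d > day)
        curve.append(still_vulnerable / total)
    return curve


def days_to_fraction(curve, target):
    """First day on which the vulnerable fraction drops to `target` or below."""
    for day, fraction in enumerate(curve):
        if fraction <= target:
            return day
    return None  # target not reached within the observed horizon


# Hypothetical patch delays for eight hosts, invented for illustration only.
delays = [1, 3, 3, 10, None, 20, 7, 2]
curve = vulnerable_fraction(delays, horizon_days=30)
half_life = days_to_fraction(curve, 0.5)
```

To account for a host carrying several instances of the same vulnerability, the same computation could be applied per instance (for example, per installation path) rather than per host.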

Certificate reissues and revocations in the wake of Heartbleed

We measured how quickly the SSL certificates that may have been compromised by the Heartbleed vulnerability in OpenSSL were revoked and reissued after the vulnerability was disclosed [IMC 2014]. A common assumption is that these operations occur at the same time, as reissuing compromised certificates without revoking them would not prevent attackers from conducting phishing and man-in-the-middle attacks. However, we found a significant gap between the rate of reissues and the rate of revocations. Three weeks after Heartbleed was disclosed, 73% of vulnerable certificates had yet to be reissued and 87% had yet to be revoked.

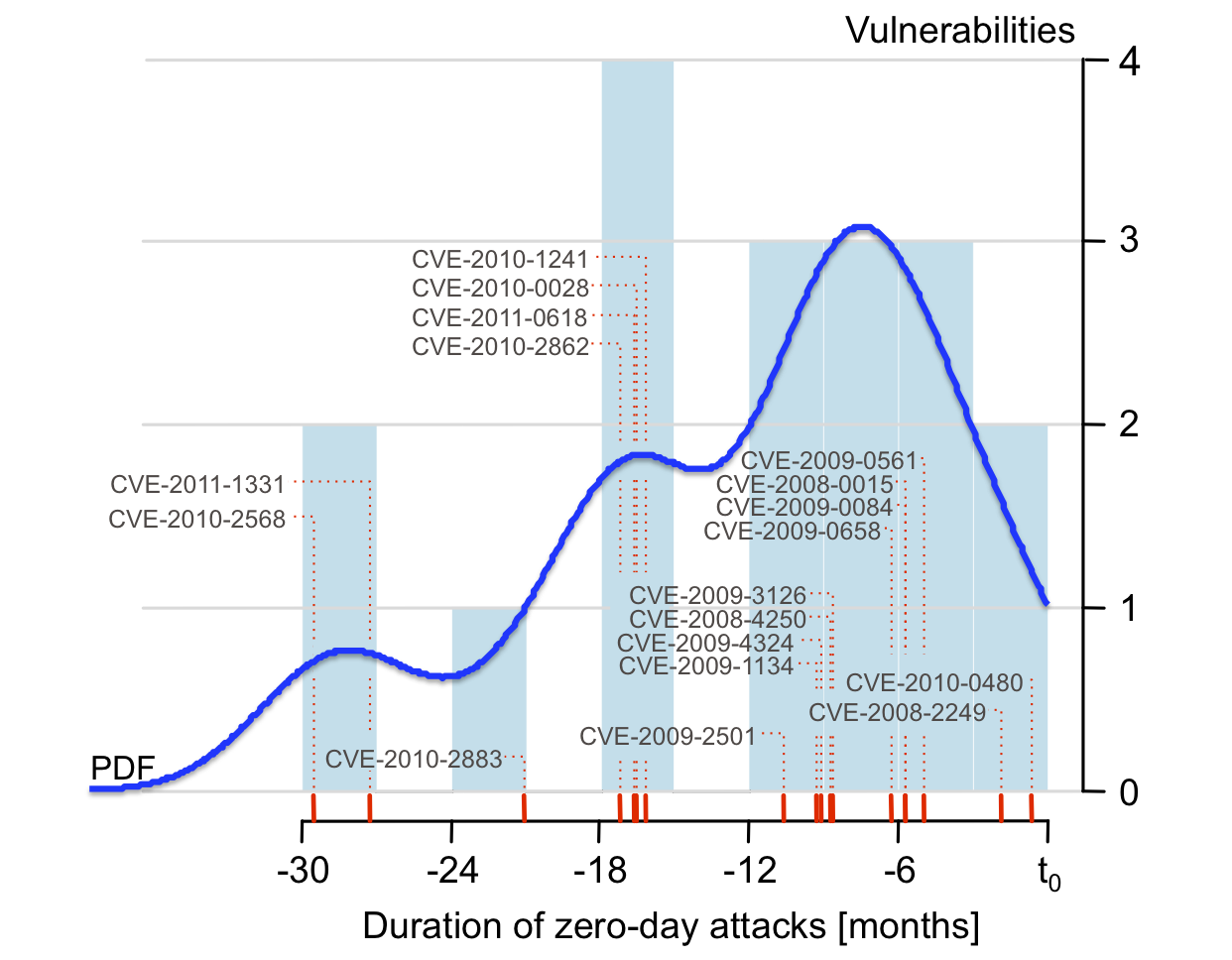

The duration and prevalence of zero-day attacks

We used WINE to measure the duration of 18 zero-day attacks, from field data collected on 11 million hosts worldwide [CCS 2012]. These attacks lasted between 19 days and 30 months, with a median of 8 months and an average of approximately 10 months (these numbers represent lower bounds rather than precise estimates). Eleven of the vulnerabilities identified were not previously known to have been employed in zero-day attacks.
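As a rough illustration of how such durations can be derived, the sketch below treats the duration of a zero-day attack as the time between the first observed exploitation and the public disclosure of the vulnerability. The record format, the identifiers, and the dates are hypothetical. Because the first observation in field data may come after the attack actually began, a duration computed this way is a lower bound.

```python
from datetime import date
from statistics import median


def zero_day_durations(records):
    """Days between first observed exploitation and disclosure.

    records: iterable of (vuln_id, first_seen, disclosed) with date values.
    Only vulnerabilities exploited before disclosure count as zero-day.
    """
    durations = {}
    for vuln_id, first_seen, disclosed in records:
        if first_seen < disclosed:
            durations[vuln_id] = (disclosed - first_seen).days
    return durations


# Hypothetical records, invented for illustration only.
records = [
    ("VULN-A", date(2010, 1, 1), date(2010, 3, 2)),
    ("VULN-B", date(2011, 5, 10), date(2011, 5, 29)),
    ("VULN-C", date(2011, 6, 1), date(2011, 5, 20)),  # first seen after disclosure
    ("VULN-D", date(2009, 1, 1), date(2010, 1, 1)),
]
durations = zero_day_durations(records)
median_days = median(durations.values())
```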

References

[CCS 2015] B. J. Kwon, J. Mondal, J. Jang, L. Bilge, and T. Dumitraș, “The Dropper Effect: Insights into Malware Distribution with Downloader Graph Analytics,” in ACM Conference on Computer and Communications Security (CCS), Denver, CO, 2015, pp. 1118–1129.

[USENIX Security 2015] C. Sabottke, O. Suciu, and T. Dumitraș, “Vulnerability disclosure in the age of social media: Exploiting Twitter for predicting real-world exploits,” in USENIX Security Symposium (USENIX Security), Washington, DC, 2015.

[IMC 2014] L. Zhang, D. Choffnes, T. Dumitraș, D. Levin, A. Mislove, A. Schulman, and C. Wilson, “Analysis of SSL Certificate Reissues and Revocations in the Wake of Heartbleed,” in ACM Internet Measurement Conference (IMC), Vancouver, Canada, 2014.

[CCS 2012] L. Bilge and T. Dumitraș, “Before we knew it: An empirical study of zero-day attacks in the real world,” in ACM Conference on Computer and Communications Security (CCS), Raleigh, NC, 2012, pp. 833–844.

[EDCC 2012] T. Dumitraș and P. Efstathopoulos, “The Provenance of WINE,” in European Dependable Computing Conference (EDCC), Sibiu, Romania, 2012, pp. 126–131.

[CSET 2011] T. Dumitraș and I. Neamtiu, “Experimental Challenges in Cyber Security: A Story of Provenance and Lineage for Malware,” in USENIX Workshop on Cyber Security Experimentation and Test (CSET), San Francisco, CA, 2011.

[BADGERS 2011] T. Dumitraș and D. Shou, “Toward a standard benchmark for computer security research: The Worldwide Intelligence Network Environment (WINE),” in ACM Workshop on Building Analysis Datasets and Gathering Experience Returns for Security (BADGERS), Salzburg, Austria, 2011.
